Apache Argus, an Apache open source project offering comprehensive security for today’s Hadoop installations, is likely to become an important cornerstone of modern enterprise Big Data architectures. It is already quite sophisticated compared to other product offerings.

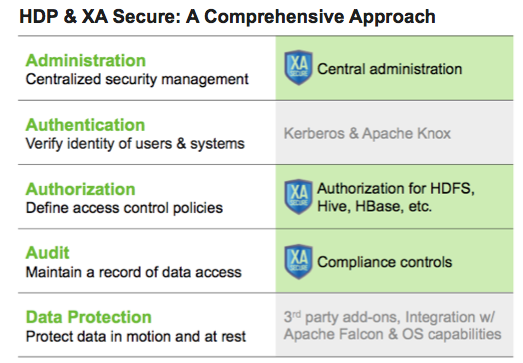

The key aspects of Argus are Administration, Authorization, and Audit Logging, which together cover most security demands. In the future we might also see Data Protection (encryption).

Argus consists of four major components that, tied together, build a secure layer around your Hadoop installation. The Administration Portal, a web application, manages and accesses the Audit Server and the Policy Manager, two other important components of Apache Argus. On the client side, at Hadoop services like HiveServer2 or the NameNode, Argus installs specific agents that intercept requests and check them against the specified policies.
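
To make the agent’s role more concrete, here is a minimal Python sketch of the authorize-then-audit flow an agent performs for each request. `Policy`, `Agent`, the naive path-prefix matching, the deny-by-default rule, and the `hr` group are hypothetical simplifications chosen for illustration; they are not Argus classes or its actual policy model, and real agents may behave differently.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Policy:
    """Hypothetical, heavily simplified policy: who may do what below a path prefix."""
    resource_prefix: str
    users: frozenset = frozenset()
    groups: frozenset = frozenset()
    accesses: frozenset = frozenset({"read"})

    def allows(self, user, user_groups, resource, access):
        # Naive prefix match: "/data/hr" would also match "/data/hrx".
        return (
            resource.startswith(self.resource_prefix)
            and access in self.accesses
            and (user in self.users or not self.groups.isdisjoint(user_groups))
        )


@dataclass
class Agent:
    """Hypothetical agent sitting in front of a service such as the NameNode."""
    policies: list
    audit_log: list = field(default_factory=list)

    def authorize(self, user, user_groups, resource, access):
        # Deny by default: access is granted only if some policy matches.
        allowed = any(p.allows(user, user_groups, resource, access) for p in self.policies)
        # Record every decision, allowed or not (the "Audit Logging" aspect).
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "resource": resource,
            "access": access,
            "allowed": allowed,
        })
        return allowed


if __name__ == "__main__":
    hr_policy = Policy("/data/hr", groups=frozenset({"hr"}), accesses=frozenset({"read", "write"}))
    agent = Agent([hr_policy])
    print(agent.authorize("hr1", ["hr"], "/data/hr/salaries.csv", "read"))
    print(agent.authorize("mktg1", ["mktg"], "/data/hr/salaries.csv", "read"))
```

Keeping the decision and the audit record in one method mirrors the idea that every request passing through an agent is both checked and logged.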

A key aspect of Argus is that the clients, i.e. the Argus agents, don’t have to query the Policy Server on every single client call; instead, they are updated at a regular interval. This improves scalability and also ensures that clients keep working even when the Policy Server is down.
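
The polling behaviour described above can be sketched in a few lines of Python. `CachingPolicyClient` and the `fetch_policies` callable are hypothetical names, and the 30-second default interval is only an assumption borrowed from the typical update delay mentioned later in this post; the real agents’ refresh logic may differ.

```python
import time


class CachingPolicyClient:
    """Hypothetical agent-side policy cache, not the actual Argus implementation.

    Policies are fetched at most once per refresh interval instead of on every
    request. If a refresh fails, the last known policies stay in effect.
    """

    def __init__(self, fetch_policies, refresh_interval=30.0, clock=time.monotonic):
        self._fetch = fetch_policies        # callable that asks the Policy Server
        self._interval = refresh_interval   # seconds between refresh attempts
        self._clock = clock                 # injectable so the behaviour can be tested
        self._policies = None
        self._last_refresh = None

    def policies(self):
        now = self._clock()
        if self._last_refresh is None or now - self._last_refresh >= self._interval:
            try:
                self._policies = self._fetch()
            except OSError:
                # Policy Server unreachable: keep the cached copy, if we have one.
                if self._policies is None:
                    raise
            self._last_refresh = now
        return self._policies
```

A failed refresh is only retried after the next interval, so an unreachable Policy Server is not hit by every single request, and requests keep being evaluated against the last policies that were successfully fetched.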

Let’s go ahead and install the most recent version of Apache Argus on the HDP Sandbox 2.1. After installing the Policy Manager and the Hive and HDFS agents, you should have a good idea of how Argus operates and a solid environment for testing specific use cases.

In this part we’ll only install the Argus Policy Manager, synchronised with our OpenLdap installation for user and group management. We will use our kerberized HDP Sandbox throughout this post.

Getting Apache Argus

Installing Apache Argus currently involves quite some hassle, and much of the current development effort is devoted to improving this in future releases. To get Apache Argus up and running, we’ll have to check out the code, build it using Maven, and upload each individual component to the appropriate nodes.

$ git clone git://git.apache.org/incubator-argus.git
$ cd incubator-argus
$ mvn install assembly:assembly
$ scp -P 2222 target/*.tar root@localhost:argus

Preliminary Setup

Throughout this and the following posts we will demonstrate how Argus applies rule-based authorization to central Hadoop services like HDFS or Hive. To do so we need, first of all, central user and group management backed by a directory service like OpenLdap. Second, the cluster should be kerberized to ensure proper authentication. Finally, OpenLdap should contain example users and groups that we can work with.

You’ll find a tutorial for installing OpenLdap here. For kerberizing the Sandbox you can refer to this post here. For the example users and groups, please download base.ldif, users.ldif, and groups.ldif and load them into OpenLdap. Make sure to create a principal for each user in the KDC:

kadmin.local -q "addprinc mktg1@MYCORP.NET" #pw: mktg1
kadmin.local -q "addprinc mktg2@MYCORP.NET" #pw: mktg2
kadmin.local -q "addprinc mktg3@MYCORP.NET" #pw: mktg3
kadmin.local -q "addprinc hr1@MYCORP.NET" #pw: hr1
kadmin.local -q "addprinc hr2@MYCORP.NET" #pw: hr2
kadmin.local -q "addprinc hr3@MYCORP.NET" #pw: hr3
kadmin.local -q "addprinc legal1@MYCORP.NET" #pw: legal1
kadmin.local -q "addprinc legal2@MYCORP.NET" #pw: legal2
kadmin.local -q "addprinc legal3@MYCORP.NET" #pw: legal3
kadmin.local -q "addprinc finance1@MYCORP.NET" #pw: finance1
kadmin.local -q "addprinc finance2@MYCORP.NET" #pw: finance2
kadmin.local -q "addprinc finance3@MYCORP.NET" #pw: finance3
kadmin.local -q "addprinc sales1@MYCORP.NET" #pw: sales1
kadmin.local -q "addprinc sales2@MYCORP.NET" #pw: sales2
kadmin.local -q "addprinc sales3@MYCORP.NET" #pw: sales3
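
Typing fifteen principals and their passwords by hand is tedious. The following Python sketch prints (or, with `dry_run=False`, runs) the same `kadmin.local` calls, using the `-pw` option of `addprinc` so no password prompt appears, plus one `ldapadd` call per LDIF file. The LDAP URL, bind DN, and password are assumptions taken from the usersync configuration shown later in this post, and using the user name as password is only acceptable in a throwaway sandbox.

```python
import shlex
import subprocess

# Assumptions: bind DN/password match the usersync sample config in this post,
# and the three LDIF files have been downloaded into the current directory.
LDAP_URL = "ldap://localhost:389"
BIND_DN = "cn=root,dc=mycorp,dc=net"
BIND_PW = "horton"
LDIF_FILES = ["base.ldif", "users.ldif", "groups.ldif"]  # base first: presumably defines parent entries
DEPARTMENTS = ["mktg", "hr", "legal", "finance", "sales"]
REALM = "MYCORP.NET"


def ldap_commands():
    """One ldapadd call per LDIF file, using simple (-x) authentication."""
    return [["ldapadd", "-x", "-H", LDAP_URL, "-D", BIND_DN, "-w", BIND_PW, "-f", ldif]
            for ldif in LDIF_FILES]


def principal_commands(count=3):
    """One kadmin.local call per example user; the password equals the user name,
    as in the list above. '-pw' avoids the interactive password prompt."""
    commands = []
    for dept in DEPARTMENTS:
        for i in range(1, count + 1):
            user = f"{dept}{i}"
            commands.append(["kadmin.local", "-q", f"addprinc -pw {user} {user}@{REALM}"])
    return commands


def run(dry_run=True):
    for cmd in ldap_commands() + principal_commands():
        if dry_run:
            print(shlex.join(cmd))  # only show what would be executed
        else:
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    run(dry_run=True)
```

Running it in dry-run mode first lets you review the commands before executing them as root on the Sandbox.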

Installing the Portal and Policy Manager

We’ll start our installation of Apache Argus by setting up the Policy Manager and the Administration Portal. To do so, we have to untar the argus-0.4.0-admin.tar file before configuring and running the provided installation script.

$ tar xf argus-0.4.0-admin.tar
$ cd argus-0.4.0-admin
$ vi install.properties
# already part of sandbox (db connector for argus)
$ yum install mysql-connector-java

In install.properties it is important to point to an existing database with proper credentials. The main purpose of the database created here is to store the policies set up by administrative users. Those policies are cached at the agents so that not every query needs to go through the database. Another benefit of caching the policies at the agents is that the cluster continues to be available even if the Policy Server is down. The only slight downside of this approach is a small delay before new or updated policies take effect; usually this should not be more than 30 seconds.

Below is a basic configuration for our Argus installation, using MySQL with very basic authentication values:

$ cat install.properties
#------------------------- DB CONFIG - BEGIN ----------------------------------
DB_FLAVOR=MYSQL
SQL_COMMAND_INVOKER='mysql'
SQL_CONNECTOR_JAR=/usr/share/java/mysql-connector-java.jar
# DB password for the DB admin user-id
db_root_user=root
db_root_password=
db_host=localhost
# DB UserId used for the XASecure schema
db_name=xasecure
db_user=xaadmin
db_password=xaadmin
# DB UserId for storing auditlog information
audit_db_name=xasecure
audit_db_user=xalogger
audit_db_password=xasecure
# ------- PolicyManager CONFIG ----------------
policymgr_external_url=http://localhost:6080
policymgr_http_enabled=true
# ------- UNIX User CONFIG ----------------
unix_user=xasecure
unix_group=xasecure
# Will install xasecure-ugsync package with LDAP after the policymanager installation is finished.
#LDAP|ACTIVE_DIRECTORY|UNIX|NONE
authentication_method=NONE
remoteLoginEnabled=true
authServiceHostName=localhost
authServicePort=5151
# ################# DO NOT MODIFY ANY VARIABLES BELOW #########################
....
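
Before running install.sh it can help to sanity-check install.properties. The following Python sketch parses the simple KEY=VALUE format and reports empty or missing values and a missing connector jar. The list of required keys is our own selection based on the sample above, not an official schema, and the function names are hypothetical.

```python
from pathlib import Path

# Keys the configuration above relies on; our own selection, not an official schema.
# db_root_password is deliberately not listed: it is empty in the sandbox sample.
REQUIRED_KEYS = [
    "DB_FLAVOR", "SQL_CONNECTOR_JAR", "db_root_user", "db_host",
    "db_name", "db_user", "db_password", "policymgr_external_url",
]


def parse_properties(text):
    """Parse simple KEY=VALUE lines, skipping blank lines and '#' comments."""
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        props[key.strip()] = value.strip()
    return props


def check_install_properties(props):
    """Return a list of human-readable problems (empty if none were found)."""
    problems = [f"{key} is missing or empty" for key in REQUIRED_KEYS if not props.get(key)]
    jar = props.get("SQL_CONNECTOR_JAR")
    if jar and not Path(jar).is_file():
        problems.append(f"connector jar not found: {jar}")
    return problems


if __name__ == "__main__":
    path = Path("install.properties")
    if path.is_file():
        for problem in check_install_properties(parse_properties(path.read_text())):
            print("WARNING:", problem)
    else:
        print(f"{path} not found; run this next to install.sh")
```

The parser also strips spaces around `=`, so it accepts both the `KEY=value` and the `KEY = value` style used in the Argus property files.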

Running install.sh from the same folder should install the Argus Policy Manager on your machine. After the script completes successfully, you should be able to access the Administration Portal by pointing your browser to http://localhost:6080 (make sure your Sandbox forwards the port correctly). If anything goes wrong, dig through the error messages and check that your configuration is correct.
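
If you prefer checking from the command line instead of the browser, a small Python helper can test whether the portal answers at all. It only uses the standard library’s `urllib.request`; the function name `portal_is_up` and the 5-second timeout are our own choices, and a successful response only tells you the web application is reachable, not that it is fully configured.

```python
import urllib.request


def portal_is_up(url="http://localhost:6080", timeout=5.0):
    """Return True if the Argus portal answers with a successful HTTP status."""
    try:
        # urlopen follows redirects and raises HTTPError for 4xx/5xx responses.
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return 200 <= response.status < 400
    except OSError:
        # URLError, HTTPError, refused connections and timeouts all derive from OSError.
        return False


if __name__ == "__main__":
    print("portal reachable:", portal_is_up())
```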

Congratulations, you now have a running Policy Manager for your Hadoop setup.

Synchronising Users and Groups Using LDAP

Argus can be set up to synchronise users and groups from an existing directory service. The component we’ll have to install for this is called ugsync, which presumably stands for user-group synchronisation. Untar the existing archive in your argus directory:

$ tar xf argus-0.4.0-admin.tar
$ cd argus-0.4.0-admin

The installation of the user-group synchronisation module follows the same pattern as that of the Policy Manager we installed previously. You should also find an install.properties file here, which we are going to adjust to our needs. If you have followed the OpenLdap setup from a previous post, you should be able to use the sample configuration below:

# cat install.properties
## POLICY_MGR_URL = http://policymanager.xasecure.net:6080
POLICY_MGR_URL = http://sandbox.hortonworks.com:6080
# sync source, only unix and ldap are supported at present
SYNC_SOURCE = ldap
# Minimum Unix User-id to start SYNC.
MIN_UNIX_USER_ID_TO_SYNC = 1000
# sync interval in minutes
SYNC_INTERVAL = 360
# URL of source ldap
SYNC_LDAP_URL = ldap://localhost:389
# ldap bind dn used to connect to ldap and query for users and groups
SYNC_LDAP_BIND_DN = cn=root,dc=mycorp,dc=net
# ldap bind password for the bind dn specified above
SYNC_LDAP_BIND_PASSWORD = horton
CRED_KEYSTORE_FILENAME=/usr/lib/xausersync/.jceks/xausersync.jceks
# search base for users
SYNC_LDAP_USER_SEARCH_BASE = ou=Users,dc=mycorp,dc=net
# search scope for the users, only base, one and sub are supported values
SYNC_LDAP_USER_SEARCH_SCOPE = sub
# objectclass to identify user entries
SYNC_LDAP_USER_OBJECT_CLASS = person
# optional additional filter constraining the users selected for syncing
SYNC_LDAP_USER_SEARCH_FILTER =
# attribute from user entry that would be treated as user name
SYNC_LDAP_USER_NAME_ATTRIBUTE = cn
# attribute from user entry whose values would be treated as
SYNC_LDAP_USER_GROUP_NAME_ATTRIBUTE = memberof,ismemberof
# UserSync - Case Conversion Flags
# possible values: none, lower, upper
SYNC_LDAP_USERNAME_CASE_CONVERSION=lower
SYNC_LDAP_GROUPNAME_CASE_CONVERSION=lower
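
Before installing, it can be useful to preview which LDAP entries the sync will see. The Python sketch below turns the sample settings into an equivalent `ldapsearch` command (standard OpenLDAP client flags: `-x` simple bind, `-H` URI, `-D` bind DN, `-w` password, `-b` search base, `-s` scope). How usersync combines the object class with the optional extra filter is an assumption here (we AND them), and `ldapsearch_preview` is a hypothetical helper, not part of Argus.

```python
import shlex

# Values copied from the sample usersync install.properties above.
SAMPLE = {
    "SYNC_LDAP_URL": "ldap://localhost:389",
    "SYNC_LDAP_BIND_DN": "cn=root,dc=mycorp,dc=net",
    "SYNC_LDAP_BIND_PASSWORD": "horton",
    "SYNC_LDAP_USER_SEARCH_BASE": "ou=Users,dc=mycorp,dc=net",
    "SYNC_LDAP_USER_SEARCH_SCOPE": "sub",
    "SYNC_LDAP_USER_OBJECT_CLASS": "person",
    "SYNC_LDAP_USER_SEARCH_FILTER": "",
    "SYNC_LDAP_USER_NAME_ATTRIBUTE": "cn",
    "SYNC_LDAP_USER_GROUP_NAME_ATTRIBUTE": "memberof,ismemberof",
}


def ldapsearch_preview(props):
    """Build an ldapsearch command approximating the user search usersync runs.

    Assumption: the object class and the optional extra filter are AND-ed together.
    """
    search_filter = f"(objectclass={props['SYNC_LDAP_USER_OBJECT_CLASS']})"
    extra = props.get("SYNC_LDAP_USER_SEARCH_FILTER", "").strip()
    if extra:
        if not extra.startswith("("):
            extra = f"({extra})"
        search_filter = f"(&{search_filter}{extra})"
    group_attrs = [a.strip() for a in props["SYNC_LDAP_USER_GROUP_NAME_ATTRIBUTE"].split(",") if a.strip()]
    return [
        "ldapsearch", "-x",
        "-H", props["SYNC_LDAP_URL"],
        "-D", props["SYNC_LDAP_BIND_DN"],
        "-w", props["SYNC_LDAP_BIND_PASSWORD"],
        "-b", props["SYNC_LDAP_USER_SEARCH_BASE"],
        "-s", props["SYNC_LDAP_USER_SEARCH_SCOPE"],
        search_filter,
        props["SYNC_LDAP_USER_NAME_ATTRIBUTE"],
        *group_attrs,
    ]


if __name__ == "__main__":
    # Paste the printed command into a shell on the Sandbox.
    print(shlex.join(ldapsearch_preview(SAMPLE)))
```

If the returned entries carry no memberof values, group membership will probably not show up in Argus either.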

If everything is set up correctly, running the install.sh script should install the user-group synchronisation service. Pointing your browser to http://localhost:6080/index.html#!/users/usertab should then display the users and groups contained in LDAP.

Congratulations again! We are now ready to create policies based on the users in our directory service. What remains is to secure the Hadoop services using the Argus agents, which we’ll do next. See the upcoming posts for more on how to secure your Data Lake.

I am seeing a permissions error. Any ideas?

c:\ranger>git clone git://git.apache.org/incubator-argus.git
Cloning into 'incubator-argus'...
fatal: remote error: access denied or repository not exported: /incubator-argus.git

c:\ranger>

Got it: the repo has changed to incubator-ranger. I am not sure whether the project changed or whether that is a Hortonworks thing.

Steve, Apache Argus has been renamed to Apache Ranger.

If you want a demo, it’s best to download the HDP 2.2 Sandbox and try the included Ranger tutorials.

Let me know if you need any assistance.