Apache Kafka is a distributed system designed for streams. It is often categorized as a messaging system and serves a similar role, but it provides a fundamentally different abstraction. The key abstraction to keep in mind is a structured commit log of events, where an event is any kind of data emitted by a system, a user, or a machine. Kafka is built to be:

- Fault-tolerant

- High-throughput

- Horizontally scalable

- Capable of geographically distributing data streams and processing
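
To make the commit-log abstraction more concrete, here is a minimal in-memory sketch. It is not Kafka's implementation or client API; the `CommitLog` class, its `append` and `read` methods, and the reader names `billing` and `analytics` are hypothetical, chosen only for illustration. The point is that events are appended to an ordered log, and each reader tracks its own position (offset) instead of removing messages, as a classic queue would.

```python
from dataclasses import dataclass, field
from typing import Any, List


@dataclass
class CommitLog:
    """Toy append-only log (illustrative only, not Kafka's implementation)."""

    _events: List[Any] = field(default_factory=list)

    def append(self, event: Any) -> int:
        """Append an event and return its offset (its position in the log)."""
        self._events.append(event)
        return len(self._events) - 1

    def read(self, offset: int, max_events: int = 10) -> List[Any]:
        """Return up to max_events events starting at offset; nothing is removed."""
        return self._events[offset:offset + max_events]


log = CommitLog()
log.append({"type": "purchase", "order_id": 1})
log.append({"type": "registration", "user": "alice"})
log.append({"type": "claim", "order_id": 1})

# Each reader keeps its own offset, so readers progress independently.
offsets = {"billing": 0, "analytics": 0}

billing_batch = log.read(offsets["billing"], max_events=2)
offsets["billing"] += len(billing_batch)

analytics_batch = log.read(offsets["analytics"])
offsets["analytics"] += len(analytics_batch)
```

Because reading does not consume events, the hypothetical `billing` reader can lag behind `analytics` without either affecting the other, and a new reader could start from offset 0 and replay the full history.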

A constantly growing amount of the data generated at today's companies is event data. While there is a tendency to group machine-generated data under the umbrella term Internet of Things (IoT), it is crucial to understand that business is inherently event-driven.

A purchase, a customer claim, or a registration are just a few examples of such events. Business is interactive, and when analyzing the data, time matters. Most of this data has its highest value when analyzed in, or close to, real time.

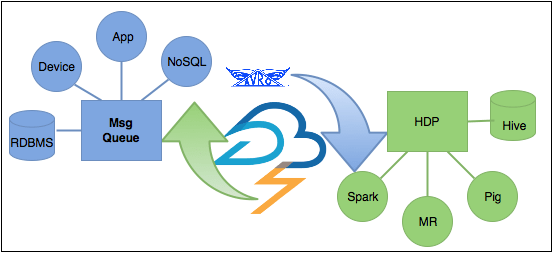

Apache Kafka was created to solve two main problems that arise from the ever-increasing demand for stream data processing. First, designed for reliability, Kafka can scale with the growing demand for event passing. Second, Kafka lets various applications and platforms interact with the same events, which helps to orchestrate today's complex architectures by providing a central message hub for every system. Today, chances are that all of that data will end up in Hadoop for further, or even real-time, analysis, making Kafka a queue to Hadoop.